Project 4 – clustering

Project 4 – Matt Sloan

Introduce the problem

Introduce the project. What is the problem you are trying to solve? What questions are you trying to answer?

https://www.kaggle.com/datasets/cisautomotiveapi/large-car-dataset/download?datasetVersionNumber=4 (warning: large file)

from the dataset page:

https://www.kaggle.com/datasets/cisautomotiveapi/large-car-dataset

Notebook template for the website: dark-mode HTML export command, lightweight JPG figures, warnings ignored, default imports (numpy, pandas, train_test_split)

I’m looking to see if there are specific features that tend to make vehicles more fuel efficient. We all know that having a smaller engine inherently means a higher MPG… or does it? What other factors are at play here? Does one manufacturer tend to specialize in fuel efficiency? Bonus: which cars have the best price/MPG ratio?

What is clustering and how does it work?

With supervised machine learning, you always have a target that you’re trying to predict, so you can compare your algorithm’s output against the known answers.

Clustering is unsupervised machine learning. In other words, you will NOT have a target to compare to. It does not predict anything. Instead, clustering is useful for breaking down your data samples into groups.

This can be helpful when you want to group samples by their overall similarity rather than by a known label.

In order to explain what the k-means algorithm does, imagine plotting one feature of the data against another. If these were numerical values, you could plot every sample on the same plane. If we were to look at phone battery life vs phone price, we could end up with a plot that has several obvious groups, or clusters, of phones. K-means takes a number, k, that determines how many clusters to look for. It starts with k center points (centroids), assigns every phone to its nearest centroid, moves each centroid to the average position of the phones assigned to it, and repeats those two steps until the assignments stop changing. The result is k groups in which every phone belongs to the group whose centroid is closest to it. This is a simple example; most datasets have more than 2 variables, but the same distance-based idea works in any number of dimensions!
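The two alternating steps described above (assign each point to its nearest centroid, then move each centroid to the mean of its points) can be sketched in plain NumPy. This is a minimal illustration of Lloyd's algorithm, not the scikit-learn `KMeans` imported later in the notebook; the phone data are made-up points, and picking random data points as the starting centroids is an assumption (scikit-learn defaults to the smarter k-means++ start).

```python
import numpy as np


def kmeans(points, k, n_iters=100, seed=0):
    """Lloyd's k-means on an array of shape (n_samples, n_features)."""
    rng = np.random.default_rng(seed)
    # start from k distinct data points picked at random as the initial centroids
    centroids = points[rng.choice(len(points), size=k, replace=False)]
    for _ in range(n_iters):
        # assignment step: distance from every point to every centroid, keep the closest
        dists = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        # update step: move each centroid to the mean of its assigned points
        # (an empty cluster keeps its old centroid)
        new_centroids = np.array([
            points[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break  # centroids stopped moving, so the assignments are stable
        centroids = new_centroids
    return labels, centroids


# made-up phones: (battery life in hours, price in hundreds of dollars)
rng = np.random.default_rng(42)
phones = np.vstack([
    rng.normal([10, 2], 0.5, size=(20, 2)),   # budget phones
    rng.normal([15, 5], 0.5, size=(20, 2)),   # mid-range phones
    rng.normal([20, 10], 0.5, size=(20, 2)),  # flagship phones
])

labels, centroids = kmeans(phones, k=3)
print(np.round(centroids, 1))
print(np.bincount(labels, minlength=3))  # how many phones landed in each cluster
```

Because the result depends on the starting centroids, k-means is usually run several times from different starts and the run with the smallest within-cluster sum of squares is kept; scikit-learn's `n_init` parameter does exactly that.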

Introduce the data

Where did you find it? What is the data about (include links)? What are the features (with additional explanations if not already self-explanatory from the name itself)?

I was looking for vehicle MPG data that was also paired with MSRP. I decided to use the dataset from fueleconomy.gov for MPG values and this… 5 GB dataset from Kaggle for vehicle info.

It appears to be a sample of AutoDealerData.com vehicle listings from Illinois, covering June 2018 until 2020.

I will probably remove duplicates in an attempt to save my RAM from annihilation.

This dataset has 156 features and ~5.7 million entries.

The features that I am most interested in are:

- msrp

- brandName

- modelName

- bodyClass

- DisplacementCC

- Doors

- DriveType

- EngineCylinders

- EngineManufacturer

- MakeID

- Manufacturer

- Model

- ModelYear

- Turbo

- TransmissionSpeeds

- TransmissionStyle

However, I will be utilizing most of the remaining features as well.

And from the fueleconomy.gov dataset, I will be using:

- comb08 – combined MPG for fuel type 1 (I am assuming the primary fuel type is the one normally used)

- make – manufacturer (division)

- mfrCode – 3-character manufacturer code

- model – model name (carline)

- year – model year

- displ – engine displacement in liters

I intend to use these variables to accurately append the combined MPG to each vehicle.

Data Understanding/Visualization

Use methods to try to further understand and visualize the data. Make sure to remember your initial problems/questions when completing this step. While exploring, does anything else stand out to you (perhaps any surprising insights?)

How does this step relate to your modeling?

Let us start out by loading the files.

# for our dataframes

import pandas as pd

pd.set_option('display.max_columns', None)

# for our arrays and dtypes

import numpy as np

# for our plotting

import matplotlib.pyplot as plt

import seaborn as sns

# some plotting settings

%config InlineBackend.figure_format ='jpg'

sns.set(rc={"figure.figsize":(10, 10)})

sns.set(rc={"figure.dpi": 100})

# our ml library, scikit-learn

from sklearn.model_selection import train_test_split

from sklearn.ensemble import RandomForestClassifier

from sklearn.cluster import KMeans

from sklearn.preprocessing import StandardScaler

from sklearn.model_selection import GridSearchCV

# Let's disable warnings to make this prettier for posting

import warnings

warnings.filterwarnings('ignore')

# to export to HTML I have used: jupyter nbconvert --to html /path/to/example.ipynb --HTMLExporter.theme=dark

# these are the columns we need to keep

main_df_colms = ['brandName',

'modelName',

'vf_BodyClass',

'vf_FuelInjectionType',

'vf_Manufacturer',

'vf_PlantCity',

'vf_PlantCompanyName',

'vf_PlantCountry',

'vf_PlantState',

'vf_Series',

'vf_TransmissionSpeeds',

'vf_TransmissionStyle',

'vf_Trim',

'vf_Turbo',

'vf_DisplacementL',

'vf_Doors',

'vf_ModelID',

'vf_ModelYear',

]

main_df_cats = ['brandName',

'modelName',

'vf_BodyClass',

'vf_FuelInjectionType',

'vf_Manufacturer',

'vf_PlantCity',

'vf_PlantCompanyName',

'vf_PlantCountry',

'vf_PlantState',

'vf_Series',

'vf_Doors'

]

mpg_df_colms = ['comb08',

'make',

'mfrCode',

'model',

'year',

'displ',

'cylinders'

]

mpg_df_cats = ['make',

'model']

# main working df

main_df = pd.DataFrame()

# temp df for mpg data

mpg_df = pd.DataFrame()

######### Since I have already saved these as parquets...

# main_df

main_df = pd.read_parquet('data/archive/CIS_Automotive_Kaggle_Sample.parquet',

columns=main_df_colms,

use_nullable_dtypes = True)

main_df = main_df.replace(to_replace = '', value = pd.NA)

main_df[main_df_cats] = main_df[main_df_cats].astype(dtype=pd.CategoricalDtype())

# mpg df

mpg_df = pd.read_parquet('data/archive/vehicles.parquet',

columns=mpg_df_colms,

use_nullable_dtypes = True)

mpg_df = mpg_df.replace(to_replace = '', value = pd.NA)

mpg_df[mpg_df_cats] = mpg_df[mpg_df_cats].astype(dtype=pd.CategoricalDtype())

I had some issues trying to read it in chunks, so I chose which columns were the most important and loaded only those. Most columns had a large quantity of missing values anyway 🙁
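Loading only the needed columns is one memory saver; the notebook also converts its text columns to pandas' categorical dtype (`pd.CategoricalDtype()`), which stores each distinct string once plus a small integer code per row. A minimal sketch of the difference on a made-up, highly repetitive brand column (the brand list and the row count are assumptions for illustration):

```python
import numpy as np
import pandas as pd

# made-up column: a handful of brand names repeated many times, like brandName in the listings
rng = np.random.default_rng(0)
brands = rng.choice(['FORD', 'CHEVROLET', 'NISSAN', 'TOYOTA'], size=100_000)
as_object = pd.Series(brands, dtype=object)
as_category = as_object.astype(pd.CategoricalDtype())

# deep=True counts the string payloads themselves, not just the object pointers
obj_bytes = as_object.memory_usage(deep=True)
cat_bytes = as_category.memory_usage(deep=True)
print(f'object: {obj_bytes:,} bytes, category: {cat_bytes:,} bytes')
```

The saving grows with the number of rows and shrinks as the number of distinct values approaches the number of rows, so it pays off most on columns like brand, body class, or plant country.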

main_df.head()

| | brandName | modelName | vf_BodyClass | vf_FuelInjectionType | vf_Manufacturer | vf_PlantCity | vf_PlantCompanyName | vf_PlantCountry | vf_PlantState | vf_Series | vf_TransmissionSpeeds | vf_TransmissionStyle | vf_Trim | vf_Turbo | vf_DisplacementL | vf_Doors | vf_ModelID | vf_ModelYear |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | MITSUBISHI | Eclipse Spyder | Convertible/Cabriolet | Multipoint Fuel Injection (MPFI) | MITSUBISHI MOTORS NORTH AMERICA | BLOOMINGTON-NORMAL | <NA> | UNITED STATES (USA) | ILLINOIS | SPORTS | <NA> | <NA> | <NA> | <NA> | 3.0 | 2.0 | 2321.0 | 2002.0 |
| 1 | NISSAN | Altima | Sedan/Saloon | <NA> | NISSAN NORTH AMERICA INC | CANTON | Nissan North America Inc. | UNITED STATES (USA) | MISSISSIPPI | <NA> | <NA> | <NA> | <NA> | <NA> | 2.5 | 4.0 | 1904.0 | 2016.0 |
| 2 | FORD | Escape | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | Stoichiometric gasoline direct injection (SGDI) | FORD MOTOR COMPANY USA | LOUISVILLE | Louisville Assembly | UNITED STATES (USA) | KENTUCKY | SE | <NA> | <NA> | <NA> | <NA> | 1.6 | 4.0 | 1798.0 | 2014.0 |
| 3 | CHEVROLET | Cruze | Sedan/Saloon | <NA> | GENERAL MOTORS LLC | LORDSTOWN | GMNA | UNITED STATES (USA) | OHIO | Premier | <NA> | Automatic | <NA> | Yes | 1.4 | 4.0 | 1832.0 | 2017.0 |
| 4 | FORD | F-150 | Pickup | <NA> | FORD MOTOR COMPANY USA | DEARBORN | Dearborn | UNITED STATES (USA) | MICHIGAN | <NA> | <NA> | Automatic | <NA> | <NA> | 5.0 | <NA> | 1801.0 | 2019.0 |

mpg_df.head()

| | comb08 | make | mfrCode | model | year | displ | cylinders |
|---|---|---|---|---|---|---|---|
| 0 | 21 | Alfa Romeo | <NA> | Spider Veloce 2000 | 1985 | 2.0 | 4.0 |
| 1 | 11 | Ferrari | <NA> | Testarossa | 1985 | 4.9 | 12.0 |
| 2 | 27 | Dodge | <NA> | Charger | 1985 | 2.2 | 4.0 |
| 3 | 11 | Dodge | <NA> | B150/B250 Wagon 2WD | 1985 | 5.2 | 8.0 |
| 4 | 19 | Subaru | <NA> | Legacy AWD Turbo | 1993 | 2.2 | 4.0 |

main_df.describe()

| | vf_TransmissionSpeeds | vf_DisplacementL | vf_ModelID | vf_ModelYear |
|---|---|---|---|---|
| count | 1293522.0 | 5648866.0 | 5689172.0 | 5693733.0 |
| mean | 6.894028 | 3.295728 | 3716.957589 | 2015.304548 |
| std | 1.390092 | 6.486849 | 4513.854753 | 4.613182 |
| min | 1.0 | 0.0 | 1684.0 | 1980.0 |
| 25% | 6.0 | 2.0 | 1847.0 | 2014.0 |
| 50% | 6.0 | 2.5 | 1945.0 | 2017.0 |
| 75% | 8.0 | 3.6 | 2735.0 | 2019.0 |
| max | 10.0 | 200.0 | 27897.0 | 2021.0 |

mpg_df.describe()

| | comb08 | year | displ | cylinders |
|---|---|---|---|---|
| count | 45777.0 | 45777.0 | 45313.0 | 45311.0 |
| mean | 21.290932 | 2003.558031 | 3.280399 | 5.709916 |
| std | 9.637874 | 12.165146 | 1.356625 | 1.772264 |
| min | 7.0 | 1984.0 | 0.0 | 2.0 |
| 25% | 17.0 | 1992.0 | 2.2 | 4.0 |
| 50% | 20.0 | 2005.0 | 3.0 | 6.0 |
| 75% | 23.0 | 2014.0 | 4.2 | 6.0 |
| max | 142.0 | 2023.0 | 8.4 | 16.0 |

Nulls?

print("---------------- main df ----------------")

for col in main_df.columns:

    n_null = main_df[col].isnull().sum()

    if n_null > 0:

        print(f'{round(n_null / main_df.shape[0] * 100, 2)}% -----{n_null} null values in {col}')

print("\n---------------- mpg df ----------------")

for col in mpg_df.columns:

    n_null = mpg_df[col].isnull().sum()

    if n_null > 0:

        print(f'{round(n_null / mpg_df.shape[0] * 100, 2)}% -----{n_null} null values in {col}')

---------------- main df ----------------

0.02% -----1260 null values in brandName

0.1% -----5843 null values in modelName

0.2% -----11559 null values in vf_BodyClass

75.4% -----4293878 null values in vf_FuelInjectionType

0.02% -----1260 null values in vf_Manufacturer

10.79% -----614448 null values in vf_PlantCity

27.44% -----1562812 null values in vf_PlantCompanyName

3.13% -----178494 null values in vf_PlantCountry

26.74% -----1522665 null values in vf_PlantState

24.66% -----1404119 null values in vf_Series

77.29% -----4401493 null values in vf_TransmissionSpeeds

66.21% -----3770877 null values in vf_TransmissionStyle

60.5% -----3445574 null values in vf_Trim

78.82% -----4488758 null values in vf_Turbo

0.81% -----46149 null values in vf_DisplacementL

13.71% -----780801 null values in vf_Doors

0.1% -----5843 null values in vf_ModelID

0.02% -----1282 null values in vf_ModelYear

---------------- mpg df ----------------

67.3% -----30808 null values in mfrCode

1.01% -----464 null values in displ

1.02% -----466 null values in cylinders

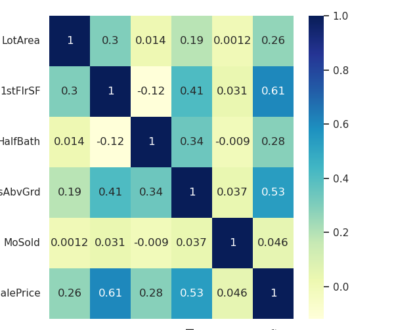

Collinearity?

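# numeric_only=True limits the correlation to numeric columns (the categorical columns would raise an error in pandas 2.x)

sns.heatmap(main_df.corr(numeric_only=True), cmap="YlGnBu", annot=True, fmt='.1g')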


sns.heatmap(mpg_df.drop(['comb08'], axis=1).corr(numeric_only=True), cmap="YlGnBu", annot=True, fmt='.1g')


Pre-processing the data

What pre-processing steps do you follow? Explain why you do each pre-processing step.

First, I want to remove columns with a large number of missing values. Let’s remove every column that is missing more than 10% of its values.

print("---------------- main df ----------------")

# iterate over a copy of the column list, since columns are deleted inside the loop

for col in list(main_df.columns):

    null_frac = main_df[col].isnull().sum() / main_df.shape[0]

    if null_frac > 0.10:

        del main_df[col]

    else:

        print(f'{round(null_frac * 100, 2)}% -----{main_df[col].isnull().sum()} null values in {col}')

print("\n---------------- mpg df ----------------")

for col in list(mpg_df.columns):

    null_frac = mpg_df[col].isnull().sum() / mpg_df.shape[0]

    if null_frac > 0.10:

        del mpg_df[col]

    else:

        print(f'{round(null_frac * 100, 2)}% -----{mpg_df[col].isnull().sum()} null values in {col}')

---------------- main df ----------------

0.02% -----1260 null values in brandName

0.1% -----5843 null values in modelName

0.2% -----11559 null values in vf_BodyClass

0.02% -----1260 null values in vf_Manufacturer

3.13% -----178494 null values in vf_PlantCountry

0.81% -----46149 null values in vf_DisplacementL

0.1% -----5843 null values in vf_ModelID

0.02% -----1282 null values in vf_ModelYear

---------------- mpg df ----------------

0.0% -----0 null values in comb08

0.0% -----0 null values in make

0.0% -----0 null values in model

0.0% -----0 null values in year

1.01% -----464 null values in displ

1.02% -----466 null values in cylinders
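The same "drop columns with more than 10% missing" rule can also be written without an explicit loop, since `isna().mean()` gives the fraction of missing values in each column. A minimal sketch on a tiny made-up frame (the helper name `drop_sparse_columns` and the toy values are hypothetical, for illustration only):

```python
import pandas as pd


def drop_sparse_columns(df, max_null_frac=0.10):
    """Keep columns whose fraction of missing values is at most max_null_frac."""
    null_frac = df.isna().mean()  # fraction of missing values per column
    return df.loc[:, null_frac <= max_null_frac], null_frac


# made-up rows: vf_Turbo is mostly missing, brandName is missing once in 11 rows
toy = pd.DataFrame({
    'brandName': ['FORD', 'NISSAN', 'FORD', None, 'MAZDA', 'FORD', 'KIA', 'FORD', 'HONDA', 'FORD', 'FORD'],
    'vf_Turbo': [None] * 9 + ['Yes', 'Yes'],
    'vf_DisplacementL': [3.5, 2.5, 5.0, 1.6, 2.0, 3.5, 2.0, 2.3, 1.5, 2.7, 3.5],
})

kept, null_frac = drop_sparse_columns(toy)
print((null_frac * 100).round(2))  # percent missing per column
print(list(kept.columns))
```

Using `<=` keeps a column that is missing exactly 10% of its values, which matches the loop above (it only deletes columns above 0.10).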

Now we’ll get rid of rows with missing values.

main_original_size = main_df.shape

mpg_original_size = mpg_df.shape

main_df = main_df.dropna()

mpg_df = mpg_df.dropna()

print(f'main df shape: {main_df.shape} and main df is now {round(main_df.shape[0]/main_original_size[0],3)*100}% of the original size ({main_original_size}).')

print(f'mpg df shape: {mpg_df.shape} and mpg df is now {round(mpg_df.shape[0]/mpg_original_size[0],3)*100}% of the original size ({mpg_original_size}).')

main df shape: (5466424, 8) and main df is now 96.0% of the original size ((5695015, 8)).

mpg df shape: (45311, 6) and mpg df is now 99.0% of the original size ((45777, 6)).

Now, since our goal is to classify vehicles by MPG and find the features associated with higher MPG values, I will bin the combined MPG into groups 5 mpg wide, where a higher bin number means a higher MPG.

# bin number = combined MPG / 5, rounded to the nearest integer (e.g. 21 mpg -> 4, 34 mpg -> 7)

mpg_df['binned_mpg'] = mpg_df['comb08'].apply(lambda x: round(x / 5)).astype(np.int32)

# also round the engine displacement

main_df['vf_DisplacementL'] = main_df['vf_DisplacementL'].round(decimals=1)

mpg_df['displ'] = mpg_df['displ'].round(decimals=1)
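To make the `binned_mpg` encoding concrete: `(5 * round(x/5))/5` simplifies to `round(x/5)`, so each bin number is the combined MPG divided by 5 and rounded. A short sketch (the helper `mpg_bin` is hypothetical; 7 and 142 are the minimum and maximum `comb08` values from `mpg_df.describe()` above):

```python
import pandas as pd


def mpg_bin(mpg):
    """Bin number as used for binned_mpg: combined MPG / 5, rounded to the nearest integer."""
    return round(mpg / 5)


# bin k covers roughly 5k - 2.5 to 5k + 2.5 mpg
for mpg in [7, 21, 31, 34, 142]:
    k = mpg_bin(mpg)
    print(f'{mpg:>3} mpg -> bin {k:>2} (about {5 * k - 2.5}-{5 * k + 2.5} mpg)')

# the same idea vectorized over a pandas Series, without apply()
comb08 = pd.Series([21, 20, 31, 34, 32])
bins = (comb08 / 5).round().astype('int32')
print(bins.tolist())
```

Python's `round()` rounds exact halves to the nearest even number, but a whole-number MPG divided by 5 never ends in exactly .5, so ties do not come up here.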

Finally, before we merge our DataFrames, let us remove duplicate rows.

main_df = main_df.drop_duplicates()

print(main_df.shape)

(15149, 8)

Let’s check it out 🙂

main_df.loc[(main_df['brandName']=='FORD') & (main_df['modelName'] == 'Explorer')]

| | brandName | modelName | vf_BodyClass | vf_Manufacturer | vf_PlantCountry | vf_DisplacementL | vf_ModelID | vf_ModelYear |
|---|---|---|---|---|---|---|---|---|
| 8 | FORD | Explorer | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | FORD MOTOR COMPANY USA | UNITED STATES (USA) | 3.5 | 1800.0 | 2018.0 |
| 17 | FORD | Explorer | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | FORD MOTOR COMPANY USA | UNITED STATES (USA) | 3.5 | 1800.0 | 2013.0 |
| 32 | FORD | Explorer | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | FORD MOTOR COMPANY USA | UNITED STATES (USA) | 3.5 | 1800.0 | 2017.0 |
| 61 | FORD | Explorer | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | FORD MOTOR COMPANY USA | UNITED STATES (USA) | 3.5 | 1800.0 | 2016.0 |
| 77 | FORD | Explorer | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | FORD MOTOR COMPANY USA | UNITED STATES (USA) | 3.5 | 1800.0 | 2015.0 |
| … | … | … | … | … | … | … | … | … |
| 998646 | FORD | Explorer | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | FORD MOTOR COMPANY USA | UNITED STATES (USA) | 5.0 | 1800.0 | 2001.0 |
| 1237656 | FORD | Explorer | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | FORD MOTOR COMPANY USA | UNITED STATES (USA) | 3.7 | 1800.0 | 2017.0 |
| 1249149 | FORD | Explorer | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | FORD MOTOR COMPANY USA | UNITED STATES (USA) | 4.0 | 1800.0 | 1994.0 |
| 1252812 | FORD | Explorer | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | FORD MOTOR COMPANY USA | UNITED STATES (USA) | 4.0 | 1800.0 | 1993.0 |
| 2379128 | FORD | Explorer | Sport Utility Vehicle (SUV)/Multi-Purpose Vehi… | FORD MOTOR COMPANY USA | UNITED STATES (USA) | 4.0 | 1800.0 | 1992.0 |

63 rows × 8 columns

Also, our mpg df has high collinearity between cylinders and displacement… surprise! (a correlation of about 0.9!) So let’s see if we can reduce some of that collinearity by replacing cylinders with displacement per cylinder (disp_per_cyl).

mpg_df['disp_per_cyl'] = mpg_df['displ']/mpg_df['cylinders']

del mpg_df['cylinders']

sns.heatmap(mpg_df.drop(['comb08'], axis = 1).corr(numeric_only=True), cmap="YlGnBu", annot=True, fmt='.1g')


A correlation of about 0.7 is better, I suppose…

Time to add our mpg values to our main df.

- make sure brand name/manufacturer and model name are the same case so we can match them

- rename our mpg_df columns to match main_df so that we can inner join on them

# set these column names to lowercase so it's easier to match (for me)

main_df['brandName'] = main_df['brandName'].str.lower()

main_df['modelName'] = main_df['modelName'].str.lower()

mpg_df['make'] = mpg_df['make'].str.lower()

mpg_df['model'] = mpg_df['model'].str.lower()

# rename the columns in the other df so they can match with the main df

mpg_df.rename(columns = {'year': 'vf_ModelYear', 'model': 'modelName', 'make': 'brandName', 'displ': 'vf_DisplacementL'}, inplace = True)

# shape of our old df?

main_df.shape

(15149, 8)

# inner join (merge) by default - our matching columns will be our 'lock and key' to make sure our 'other' column(s) get combined

comb_df = main_df.merge(mpg_df)

# shape of our new df?

comb_df.shape

(9419, 11)
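The shape change from (15149, 8) to (9419, 11) reflects two effects of an inner join on every shared column (`brandName`, `modelName`, `vf_DisplacementL`, `vf_ModelYear`): listings with no MPG match are dropped, and a listing that matches several MPG rows is repeated once per match, which is how the same Eclipse Spyder can appear twice with different `comb08` values. A toy sketch with made-up rows (the second Eclipse Spyder MPG entry stands in for, say, a different transmission variant; that reason is an assumption):

```python
import pandas as pd

# made-up listings: one row per vehicle configuration
listings = pd.DataFrame({
    'brandName': ['mitsubishi', 'nissan', 'ford'],
    'modelName': ['eclipse spyder', 'altima', 'explorer'],
    'vf_DisplacementL': [3.0, 2.5, 3.5],
    'vf_ModelYear': [2002.0, 2016.0, 2018.0],
})

# made-up MPG table: two entries for the Eclipse Spyder, none for the Explorer
mpg = pd.DataFrame({
    'brandName': ['mitsubishi', 'mitsubishi', 'nissan'],
    'modelName': ['eclipse spyder', 'eclipse spyder', 'altima'],
    'vf_DisplacementL': [3.0, 3.0, 2.5],
    'vf_ModelYear': [2002.0, 2002.0, 2016.0],
    'comb08': [21, 20, 31],
})

# with no 'on' argument, merge joins on every column name the frames share (inner join by default)
merged = listings.merge(mpg)
print(merged)

# indicator=True adds a _merge column that shows which listings found no match
check = listings.merge(mpg, how='left', indicator=True)
print(check['_merge'].value_counts())
```

Running the `indicator=True` check against the real `main_df` and `mpg_df` would show how many listings were lost to naming mismatches between the two sources.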

comb_df.head()

| | brandName | modelName | vf_BodyClass | vf_Manufacturer | vf_PlantCountry | vf_DisplacementL | vf_ModelID | vf_ModelYear | comb08 | binned_mpg | disp_per_cyl |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | mitsubishi | eclipse spyder | Convertible/Cabriolet | MITSUBISHI MOTORS NORTH AMERICA | UNITED STATES (USA) | 3.0 | 2321.0 | 2002.0 | 21 | 4 | 0.5 |
| 1 | mitsubishi | eclipse spyder | Convertible/Cabriolet | MITSUBISHI MOTORS NORTH AMERICA | UNITED STATES (USA) | 3.0 | 2321.0 | 2002.0 | 20 | 4 | 0.5 |
| 2 | nissan | altima | Sedan/Saloon | NISSAN NORTH AMERICA INC | UNITED STATES (USA) | 2.5 | 1904.0 | 2016.0 | 31 | 6 | 0.625 |
| 3 | chevrolet | cruze | Sedan/Saloon | GENERAL MOTORS LLC | UNITED STATES (USA) | 1.4 | 1832.0 | 2017.0 | 34 | 7 | 0.35 |
| 4 | chevrolet | cruze | Sedan/Saloon | GENERAL MOTORS LLC | UNITED STATES (USA) | 1.4 | 1832.0 | 2017.0 | 32 | 6 | 0.35 |

# a little bit of code that lists all of the models for each manufacturer in our dataset :)

# for make in comb_df['brandName'].unique():

#     print(make, comb_df.loc[comb_df['brandName'] == make]['modelName'].unique(), '\n')

Modeling (Clustering)

What model(s) do you use to try to solve your problem? Why do you choose those model(s)?

I will use random forests to predict the (binned) MPG of a vehicle. I believe that engine displacement will have high multicollinearity, so I will try it both with and without.

As for clustering: I will be using k-means clustering to add another feature to the dataset in an attempt to increase the accuracy of the random forest model. My goal with this is to see which feature sets are consistently associated with higher mpg values.
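A minimal sketch of using k-means labels as an extra feature, assuming scaled numeric inputs. The three synthetic columns only stand in for the real features, and `n_clusters=4` is an arbitrary choice for illustration; in practice k would be picked with something like the elbow method or silhouette scores.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.preprocessing import StandardScaler

# made-up numeric features standing in for displacement, model year, and displacement per cylinder
rng = np.random.default_rng(0)
X = np.column_stack([
    rng.uniform(1.0, 6.0, 200),     # displacement in liters
    rng.integers(1995, 2021, 200),  # model year
    rng.uniform(0.3, 0.8, 200),     # displacement per cylinder
])

# k-means is distance-based, so put the features on a comparable scale first
X_scaled = StandardScaler().fit_transform(X)

# n_init is set explicitly so the behavior does not depend on the scikit-learn version's default
km = KMeans(n_clusters=4, n_init=10, random_state=0)
cluster_id = km.fit_predict(X_scaled)

# append the cluster label as one more column for the random forest to use
X_with_cluster = np.column_stack([X_scaled, cluster_id])
print(X_with_cluster.shape)
```

To avoid leaking test information into the new feature, one would fit `KMeans` on the training split only and call `km.predict` on the test rows.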

Let us get a baseline model!

- we’ll create dummy variables for every categorical (‘object’, ‘string’, or ‘category’) field

- standardize our data

- train test split 80/20

- random forest classifier with n_estimators = 30

- compute our baseline score
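The baseline steps listed above can be sketched end to end on made-up data. Everything in this block is an assumption for illustration: the columns only mimic `comb_df`, and the toy target (bigger engines fall into a lower MPG bin) is invented so the example has a learnable signal. Fitting the scaler on the training rows only is a common precaution against leakage; random forests are not sensitive to feature scaling anyway, so that step matters more for distance-based methods like k-means.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# made-up stand-in for comb_df: one categorical column, two numeric columns, and an MPG bin target
rng = np.random.default_rng(0)
n = 500
df = pd.DataFrame({
    'vf_BodyClass': rng.choice(['Sedan/Saloon', 'Pickup', 'Coupe'], n),
    'vf_DisplacementL': rng.uniform(1.0, 6.0, n).round(1),
    'vf_ModelYear': rng.integers(1995, 2021, n),
})
# invented target: engines above 3.5 L land in bin 4, the rest in bin 6
df['binned_mpg'] = np.where(df['vf_DisplacementL'] > 3.5, 4, 6)

# 1. dummy variables for the categorical column (dtype=float keeps the matrix numeric)
x = pd.get_dummies(df.drop(columns='binned_mpg'), columns=['vf_BodyClass'], drop_first=True, dtype=float)
y = df['binned_mpg']

# 2. 80/20 split; stratify keeps the bin proportions similar in both parts
x_train, x_test, y_train, y_test = train_test_split(x, y, test_size=0.2, random_state=42, stratify=y)

# 3. standardize, fitting the scaler on the training rows only
scaler = StandardScaler().fit(x_train)
x_train_s, x_test_s = scaler.transform(x_train), scaler.transform(x_test)

# 4. random forest with 30 trees, and 5. its accuracy on the held-out 20% as the baseline
rf = RandomForestClassifier(n_estimators=30, random_state=42)
rf.fit(x_train_s, y_train)
baseline = rf.score(x_test_s, y_test)  # mean accuracy
print(f'baseline accuracy: {baseline:.3f}')
```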

x = comb_df.drop(['comb08','binned_mpg'], axis = 1)

y = comb_df['binned_mpg']

x.corr(numeric_only=True)

| | vf_DisplacementL | vf_ModelID | vf_ModelYear | disp_per_cyl |
|---|---|---|---|---|
| vf_DisplacementL | 1.000000 | -0.009071 | -0.050101 | 0.749481 |
| vf_ModelID | -0.009071 | 1.000000 | -0.142927 | -0.027157 |
| vf_ModelYear | -0.050101 | -0.142927 | 1.000000 | -0.055771 |
| disp_per_cyl | 0.749481 | -0.027157 | -0.055771 | 1.000000 |

sns.heatmap(x.corr(numeric_only=True), cmap="YlGnBu", annot=True, fmt='.1g')


Let’s add our dummies

dummy_colms = [colm for colm in x.columns if x[colm].dtype == 'object' or x[colm].dtype == 'string' or x[colm].dtype == 'category'] # some fancy list comprehension. Good practice

x = pd.get_dummies(data = x,

prefix = 'dummy',

columns = dummy_colms,

drop_first = True

)

dummy_colms

['brandName', 'modelName', 'vf_BodyClass', 'vf_Manufacturer', 'vf_PlantCountry']
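To see what `prefix='dummy'` and `drop_first=True` do to these columns, here is a small sketch on made-up rows. `drop_first=True` removes one level per encoded column (the first in sorted order), since that level is implied when all of the column's other dummies are 0. With a single shared prefix, the dummy names no longer show which original column they came from; leaving `prefix` at its default uses each column's own name instead.

```python
import pandas as pd

# made-up rows with two of the notebook's categorical columns and one numeric column
toy = pd.DataFrame({
    'brandName': ['ford', 'honda', 'ford'],
    'vf_PlantCountry': ['UNITED STATES (USA)', 'JAPAN', 'MEXICO'],
    'vf_ModelYear': [2018.0, 2016.0, 2017.0],
})

# same call pattern as the notebook: one shared prefix, first level of each column dropped
dummies = pd.get_dummies(toy, prefix='dummy', columns=['brandName', 'vf_PlantCountry'], drop_first=True)
print(list(dummies.columns))
print(dummies.shape)
```

Dropping a level mainly matters for linear models, where the full set of dummies is perfectly collinear; a random forest copes with either version.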

x.dtypes

vf_DisplacementL Float64

vf_ModelID Float64

vf_ModelYear Float64

disp_per_cyl Float64

dummy_alfa romeo uint8

...

dummy_TAIWAN uint8

dummy_THAILAND uint8

dummy_TURKEY uint8

dummy_UNITED KINGDOM (UK) uint8

dummy_UNITED STATES (USA) uint8

Length: 744, dtype: object

Let’s standardize our data now.

scaler = StandardScaler()

scaler.fit(x)

x = pd.DataFrame(scaler.transform(x), columns = x.columns)

x.head()

| vf_DisplacementL | vf_ModelID | vf_ModelYear | disp_per_cyl | dummy_alfa romeo | dummy_aston martin | dummy_audi | dummy_bentley | dummy_bmw | dummy_buick | dummy_cadillac | dummy_chevrolet | dummy_chrysler | dummy_daewoo | dummy_dodge | dummy_ferrari | dummy_fiat | dummy_ford | dummy_honda | dummy_hyundai | dummy_infiniti | dummy_isuzu | dummy_jaguar | dummy_karma | dummy_kia | dummy_lamborghini | dummy_land rover | dummy_lexus | dummy_lincoln | dummy_lotus | dummy_maserati | dummy_mazda | dummy_mercedes-benz | dummy_mercury | dummy_mini | dummy_mitsubishi | dummy_nissan | dummy_oldsmobile | dummy_plymouth | dummy_pontiac | dummy_porsche | dummy_ram | dummy_saab | dummy_saturn | dummy_subaru | dummy_suzuki | dummy_toyota | dummy_volkswagen | dummy_volvo | dummy_128i | dummy_135i | dummy_164 | dummy_200 | dummy_228i | dummy_240sx | dummy_300 | dummy_3000 gt | dummy_3000 gt spyder | dummy_300zx | dummy_318is | dummy_318ti | dummy_320i | dummy_323i | dummy_323is | dummy_325ci | dummy_325i | dummy_325ix | dummy_325xi | dummy_328d | dummy_328i | dummy_328xi | dummy_330ci | dummy_330e | dummy_330i | dummy_330xi | dummy_335d | dummy_335i | dummy_335xi | dummy_340i | dummy_350z | dummy_370z | dummy_458 italia | dummy_458 speciale | dummy_458 spider | dummy_488 gtb | dummy_488 pista | dummy_488 spider | dummy_4c | dummy_500 | dummy_525i | dummy_525xi | dummy_528i | dummy_528xi | dummy_530e | dummy_530i | dummy_530xi | dummy_535d | dummy_535i | dummy_535xi | dummy_540i | dummy_545i | dummy_550i | dummy_6000 | dummy_626 | dummy_645ci | dummy_740i | dummy_740il | dummy_740li | dummy_745i | dummy_745li | dummy_750i | dummy_750li | dummy_760i | dummy_760li | dummy_812 superfast | dummy_850i | dummy_86 | dummy_9-3 | dummy_9-5 | dummy_900 | dummy_911 | dummy_944 | dummy_a3 | dummy_a4 | dummy_a6 | dummy_a6 allroad | dummy_a8 | dummy_accent | dummy_acclaim | dummy_accord | dummy_achieva | dummy_activehybrid 5 | dummy_aerio | dummy_alero | dummy_allante | dummy_allroad | 
dummy_altima | dummy_amanti | dummy_amg gt | dummy_arnage | dummy_arteon | dummy_ascent | dummy_aspire | dummy_atlas | dummy_atlas cross sport | dummy_ats | dummy_aura | dummy_aurora | dummy_avalon | dummy_avenger | dummy_aveo | dummy_azera | dummy_beetle | dummy_bentayga | dummy_beretta | dummy_bonneville | dummy_boxster | dummy_boxster s | dummy_breeze | dummy_brougham | dummy_brz | dummy_c-hr | dummy_c70 | dummy_cabrio | dummy_cabriolet | dummy_cadenza | dummy_california | dummy_california t | dummy_camaro | dummy_camry | dummy_camry solara | dummy_capri | dummy_caprice | dummy_cascada | dummy_catera | dummy_cavalier | dummy_cayenne | dummy_cayman | dummy_cc | dummy_celebrity | dummy_celica | dummy_century | dummy_challenger | dummy_charger | dummy_chevette | dummy_cimarron | dummy_cirrus | dummy_civic | dummy_cobalt | dummy_concorde | dummy_continental | dummy_contour | dummy_cooper | dummy_cooper clubman | dummy_cooper convertible | dummy_cooper countryman | dummy_cooper coupe | dummy_cooper paceman | dummy_cooper roadster | dummy_cooper s | dummy_cooper s clubman | dummy_cooper s convertible | dummy_cooper s countryman | dummy_cooper s coupe | dummy_cooper s paceman | dummy_cooper s roadster | dummy_corolla | dummy_corolla im | dummy_corsica | dummy_corvette | dummy_cougar | dummy_cr-z | dummy_crossfire | dummy_crown victoria | dummy_cruze | dummy_ct5 | dummy_ct6 | dummy_cts | dummy_cube | dummy_cutlass | dummy_cutlass calais | dummy_cutlass ciera | dummy_cutlass cruiser | dummy_cutlass supreme | dummy_dart | dummy_db9 | dummy_dbs | dummy_deville | dummy_diamante | dummy_discovery | dummy_discovery sport | dummy_dts | dummy_dynasty | dummy_e-pace | dummy_echo | dummy_eclipse | dummy_eclipse spyder | dummy_elantra | dummy_elantra gt | dummy_elantra touring | dummy_eldorado | dummy_elr | dummy_encore | dummy_endeavor | dummy_entourage | dummy_eos | dummy_equus | dummy_escort | dummy_evora | dummy_ex35 | dummy_f-pace | dummy_f12 berlinetta | dummy_f430 | 
dummy_festiva | dummy_ff | dummy_fiero | dummy_fiesta | dummy_firebird | dummy_fit | dummy_fleetwood | dummy_flying spur | dummy_focus | dummy_forenza | dummy_forte | dummy_forte koup | dummy_freelander | dummy_fusion | dummy_fx50 | dummy_g20 | dummy_g25 | dummy_g35 | dummy_g37 | dummy_g5 | dummy_g6 | dummy_g8 | dummy_galant | dummy_genesis | dummy_genesis coupe | dummy_golf | dummy_golf alltrack | dummy_golf r | dummy_golf sportwagen | dummy_grand am | dummy_grand caravan | dummy_grand marquis | dummy_grand prix | dummy_grand vitara | dummy_granturismo | dummy_gt | dummy_gt-r | dummy_gtc4lusso | dummy_gti | dummy_gto | dummy_highlander | dummy_huracan | dummy_i30 | dummy_i35 | dummy_ilx | dummy_impala | dummy_impala limited | dummy_impreza | dummy_insight | dummy_integra | dummy_intrepid | dummy_intrigue | dummy_ion | dummy_ioniq | dummy_j30 | dummy_jetta | dummy_jetta sportwagen | dummy_jetta wagon | dummy_journey | dummy_juke | dummy_k900 | dummy_kicks | dummy_kizashi | dummy_l200 | dummy_l300 | dummy_lacrosse | dummy_lancer | dummy_lancer evolution | dummy_lancer sportback | dummy_lebaron | dummy_legacy | dummy_leganza | dummy_legend | dummy_lesabre | dummy_levante | dummy_lhs | dummy_lr2 | dummy_lr3 | dummy_lr4 | dummy_ls | dummy_lucerne | dummy_lumina | dummy_lw200 | dummy_lw300 | dummy_lx 570 | dummy_m2 | dummy_m235i | dummy_m3 | dummy_m340i | dummy_m35 | dummy_m35h | dummy_m37 | dummy_m45 | dummy_m5 | dummy_m56 | dummy_m6 | dummy_macan | dummy_magnum | dummy_malibu | dummy_marauder | dummy_mark lt | dummy_maxima | dummy_mdx | dummy_metro | dummy_milan | dummy_millenia | dummy_mirage | dummy_mirage g4 | dummy_monte carlo | dummy_montero | dummy_mpv | dummy_mr2 | dummy_mulsanne | dummy_murcielago | dummy_mustang | dummy_mx-5 | dummy_mx-6 | dummy_mystique | dummy_neon | dummy_new jetta | dummy_new yorker | dummy_niro | dummy_nsx | dummy_odyssey | dummy_omni | dummy_optima | dummy_optra | dummy_pacifica | dummy_panamera | dummy_parisienne | dummy_park avenue | 
dummy_paseo | dummy_passat | dummy_phaeton | dummy_portofino | dummy_prelude | dummy_previa | dummy_prius | dummy_prius c | dummy_prius prime | dummy_prius v | dummy_probe | dummy_promaster city | dummy_prowler | dummy_pt cruiser | dummy_q3 | dummy_q40 | dummy_q45 | dummy_q5 | dummy_q50 | dummy_q60 | dummy_q7 | dummy_q70 | dummy_q8 | dummy_quattroporte | dummy_quest | dummy_qx30 | dummy_qx50 | dummy_r32 | dummy_r8 | dummy_rabbit | dummy_range rover | dummy_range rover evoque | dummy_range rover sport | dummy_range rover velar | dummy_rapide | dummy_rav4 | dummy_rc f | dummy_reatta | dummy_regal | dummy_reno | dummy_revero | dummy_rio | dummy_riviera | dummy_rl | dummy_rlx | dummy_roadmaster | dummy_rondo | dummy_routan | dummy_rs3 | dummy_rs4 | dummy_rs6 | dummy_rsx | dummy_rx-7 | dummy_rx-8 | dummy_s-type | dummy_s2000 | dummy_s3 | dummy_s4 | dummy_s5 | dummy_s6 | dummy_s60 | dummy_s7 | dummy_s70 | dummy_s8 | dummy_s90 | dummy_sable | dummy_sc | dummy_sebring | dummy_sedona | dummy_sentra | dummy_sephia | dummy_seville | dummy_shadow | dummy_sky | dummy_skylark | dummy_sl | dummy_solstice | dummy_sonata | dummy_sonic | dummy_soul | dummy_spark | dummy_spectra | dummy_spider | dummy_spirit | dummy_sq5 | dummy_srx | dummy_ss | dummy_stealth | dummy_stelvio | dummy_stratus | dummy_sts | dummy_sunbird | dummy_sunfire | dummy_supra | dummy_svx | dummy_sx4 | dummy_taurus | dummy_tempo | dummy_tercel | dummy_testarossa | dummy_thunderbird | dummy_tiburon | dummy_tiguan | dummy_tiguan limited | dummy_tl | dummy_tlx | dummy_topaz | dummy_toronado | dummy_touareg | dummy_town and country | dummy_town car | dummy_tracer | dummy_transit connect | dummy_trax | dummy_trooper | dummy_tsx | dummy_tt rs | dummy_urus | dummy_v40 | dummy_v8 vantage | dummy_vanden plas | dummy_vanquish | dummy_veloster | dummy_venue | dummy_venza | dummy_verano | dummy_verona | dummy_versa | dummy_vibe | dummy_viper | dummy_virage | dummy_volt | dummy_voyager | dummy_wrx | dummy_x-type | dummy_x3 | 
dummy_x5 | dummy_xe | dummy_xf | dummy_xj | dummy_xj12 | dummy_xj6 | dummy_xj8 | dummy_xjr | dummy_xk | dummy_xk8 | dummy_xkr | dummy_xlr | dummy_xt5 | dummy_xts | dummy_yaris | dummy_yaris ia | dummy_z8 | dummy_zephyr | dummy_Cargo Van | dummy_Convertible/Cabriolet | dummy_Coupe | dummy_Crossover Utility Vehicle (CUV) | dummy_Hatchback/Liftback/Notchback | dummy_Incomplete | dummy_Incomplete – Chassis Cab (Double Cab) | dummy_Incomplete – Chassis Cab (Number of Cab Unknown) | dummy_Incomplete – Chassis Cab (Single Cab) | dummy_Incomplete – Commercial Chassis | dummy_Incomplete – Cutaway | dummy_Incomplete – Motor Home Chassis | dummy_Incomplete – Stripped Chassis | dummy_Low Speed Vehicle (LSV) / Neighborhood Electric Vehicle (NEV) | dummy_Minivan | dummy_Motorcycle – Cruiser | dummy_Motorcycle – Custom | dummy_Motorcycle – Dual Sport / Adventure / Supermoto / On/Off-road | dummy_Motorcycle – Scooter | dummy_Motorcycle – Sport | dummy_Motorcycle – Standard | dummy_Motorcycle – Street | dummy_Motorcycle – Touring / Sport Touring | dummy_Motorcycle – Trike | dummy_Motorcycle – Unenclosed Three Wheeled / Open Autocycle | dummy_Motorcycle – Unknown Body Class | dummy_Off-road Vehicle – All Terrain Vehicle (ATV) (Motorcycle-style) | dummy_Off-road Vehicle – Dirt Bike / Off-Road | dummy_Off-road Vehicle – Motocross (Off-road short distance closed track racing) | dummy_Off-road Vehicle – Recreational Off-Road Vehicle (ROV) | dummy_Pickup | dummy_Roadster | dummy_Sedan/Saloon | dummy_Sport Utility Truck (SUT) | dummy_Sport Utility Vehicle (SUV)/Multi-Purpose Vehicle (MPV) | dummy_Step Van / Walk-in Van | dummy_Trailer | dummy_Truck | dummy_Truck-Tractor | dummy_Van | dummy_Wagon | dummy_AIRSTREAM INC. | dummy_ALFA ROMEO S.P.A. | dummy_AM GENERAL LLC | dummy_AMERICAN HONDA MOTOR CO. INC. | dummy_AMERICAN MOTORS CORP. 
(Output truncated: the first rows of the scaled feature matrix. After the numeric features it holds one-hot dummy_ columns for each manufacturer, for example dummy_AUDI, dummy_FORD MOTOR COMPANY USA, dummy_TESLA INC. and dummy_TOYOTA MOTOR CORPORATION, followed by one column per country of origin, from dummy_AUSTRALIA through dummy_UNITED STATES (USA). Each row is almost entirely small negative values or 0.0, with a few large positive values marking the categories that vehicle belongs to.)
| -0.014573 | -0.052612 | -0.025247 | -0.010304 | -0.063645 | -0.014573 | -0.035716 | -0.038582 | -0.01785 | -0.084003 | -0.052612 | -0.023046 | -0.010304 | -0.030926 | -0.010304 | -0.039938 | -0.044959 | -0.029156 | -0.044959 | -0.032601 | -0.051588 | -0.039938 | -0.010304 | -0.023046 | -0.023046 | -0.020612 | -0.055573 | -0.023046 | -0.010304 | -0.025247 | -0.01785 | -0.027271 | -0.069286 | -0.020612 | -0.010304 | -0.010304 | 0.0 | -0.362507 | -0.455932 | -0.050543 | -0.364192 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.106686 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.023046 | -0.048386 | 1.052216 | 0.0 | -0.213059 | 0.0 | 0.0 | 0.0 | 0.0 | -0.052612 | -0.24637 | 0.0 | -0.035716 | 0.0 | 0.0 | 0.0 | -0.061072 | -0.190546 | -0.112636 | -0.042522 | 0.0 | -0.039938 | 0.0 | -0.29985 | 0.0 | -0.096554 | -0.04125 | 0.0 | 0.0 | 0.0 | -0.102007 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.115972 | 0.0 | -0.133117 | -0.025247 | -0.171461 | 0.0 | -0.08715 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.025247 | -0.093136 | -0.073784 | -0.262687 | -0.014573 | 0.0 | 0.0 | -0.078028 | 1.962818 | -0.014573 | 0.0 | 0.0 | 0.0 | -0.061941 | -0.061941 | -0.047271 | -0.183244 | -0.207554 | -0.128499 | -0.020612 | -0.056526 | 0.0 | -0.287478 | 0.0 | 0.0 | -0.029156 | -0.172116 | -0.014573 | 0.0 | -0.025247 | 0.0 | 0.0 | 0.0 | -0.027271 | 0.0 | -0.050543 | 0.0 | -0.093714 | -0.034194 | 0.0 | 0.0 | -0.010304 | 0.0 | 0.0 | 0.0 | -0.023046 | -0.131873 | -0.127643 | 0.0 | -0.091969 | 0.0 | -0.121939 | -0.183553 | -0.01785 | -0.114077 | 0.0 | 0.0 | 0.0 | 0.0 | -0.076639 | 0.0 | 0.0 | -0.084642 | 0.0 | -0.014573 | 0.0 | 0.0 | -0.105664 | 0.0 | 0.0 | 0.0 | 0.0 | -0.117838 | 0.0 | -0.108203 | -0.032601 | -0.030926 | -0.134351 | -0.06019 | -0.039938 | 0.0 | -0.014573 | 0.0 | -0.125913 | 0.0 | -0.01785 | -0.253799 | 0.0 | -0.049476 | 0.0 | 0.0 | 0.0 | 0.0 | -0.055573 | -0.072315 | -0.046129 | -0.059295 | -0.305492 | -0.037177 
| -0.01785 | -0.144252 | -0.08336 | -0.032601 | -0.385744 | -0.046129 | 0.0 | 0.0 | -0.115501 | -0.397286 | -0.288559 | -0.053617 | -0.020612 | -0.043757 | 0.0 | -0.082712 | -0.085276 | -0.288343 | -0.020612 | -0.078714 | 0.0 | -0.046129 | -0.032601 | -0.163419 | 1.27481 |